Facebook and Instagram AI Bone Analysis: The Privacy Nightmare Every Gamer Parent Needs to Know

Meta just dropped some seriously dystopian tech news that's got me questioning everything. Facebook and Instagram are now using AI to analyze kids' bone structure in photos to determine if users are actually under 13. Yeah, you read that right. They're sizing up children's bone structure from the photos and videos they post.

This isn't some sci-fi movie plot. This is happening right now on platforms where millions of gamers share their clips, screenshots, and gaming setups daily. And honestly? The implications for gaming families are way bigger than most people realize.

What Meta's AI Bone Analysis Actually Does

Let's break down this wild tech development. Meta's new AI system scans every photo and video uploaded to Facebook and Instagram, looking for what they call "general themes and visual cues." But here's where it gets weird – the system specifically analyzes facial bone structure to estimate age.

Think about it. Every gaming screenshot with your face visible. Every victory celebration selfie. Every family photo at a gaming convention. All getting run through bone structure analysis by an AI that's trying to figure out how old everyone in the frame is.

The goal? Catching kids who lie about their age to create accounts. Meta says this helps them enforce their 13-and-over policy more effectively than traditional methods.

Why Traditional Age Verification Failed

Tbh, the old system was pretty busted. Kids would just lie about their birth year – not exactly rocket science to fake being born in 2010 instead of 2012. Meta's previous detection relied mostly on user reports and obvious behavioral patterns.

But now? They're getting way more invasive. And for gaming families, this creates some seriously uncomfortable questions about privacy.

The Gaming Family Privacy Problem

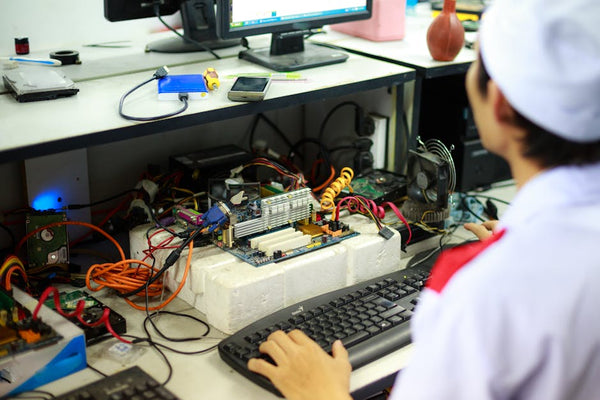

Here's where this hits close to home. Last week, I was helping a customer at our shop here in Orange, TX set up a new gaming rig for his 12-year-old son. Kid's already a beast at Fortnite, better than most adults I know. But now this family has to worry about Meta's AI analyzing their child's facial structure every time they post gaming content together.

Gaming content is inherently social. We share our setups. We post victory screenshots. We celebrate tournament wins with family photos. But Meta's bone analysis means every single photo with a child potentially triggers algorithmic scrutiny.

What happens when the AI gets it wrong? Because it absolutely will get it wrong sometimes. Facial recognition and age estimation tech isn't perfect, especially when you're dealing with lighting conditions in gaming rooms or the weird angles we use for setup photos.

The False Positive Nightmare

Imagine this scenario: Your 14-year-old just hit Champion rank in Rocket League. Huge achievement, right? You post a celebration photo together. Meta's AI flags your teen as potentially under 13 because of bone structure analysis. Suddenly their account is under review.

Now they can't access their gaming communities. Can't share clips with friends. Can't participate in the social aspects of gaming that make victories meaningful. All because an algorithm made a mistake about their cheekbones.
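
Quick back-of-the-envelope math shows why this matters at scale. Here's a minimal Python sketch. To be clear, every number in it is a made-up assumption for illustration (I don't have Meta's real error rates), and the function names are mine, not anything from Meta.

```python
# Hypothetical numbers only: these are NOT Meta's figures.

def expected_false_flags(legit_teens: int, false_positive_rate: float) -> int:
    """Expected number of 13+ users wrongly flagged as under 13."""
    return round(legit_teens * false_positive_rate)


def flag_precision(legit_teens: int, underage: int,
                   false_positive_rate: float, true_positive_rate: float) -> float:
    """Share of all flagged accounts that really belong to under-13 users."""
    true_flags = underage * true_positive_rate
    false_flags = legit_teens * false_positive_rate
    return true_flags / (true_flags + false_flags)


if __name__ == "__main__":
    teens = 1_000_000   # assumed: legit 13+ teens whose photos get scanned
    underage = 50_000   # assumed: under-13 users who lied about their age
    fpr = 0.02          # assumed: 2% of legit teens get flagged by mistake
    tpr = 0.90          # assumed: 90% of underage users get caught

    print("Legit teens wrongly flagged:", expected_false_flags(teens, fpr))
    print("Share of flags that are correct:",
          round(flag_precision(teens, underage, fpr, tpr), 2))
```

With those assumed inputs, a "mere" 2% error rate still means tens of thousands of legit teens stuck in account review, and a big chunk of all flags land on the wrong kid. Plug in your own guesses and the shape of the problem stays the same.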

"The system analyzes facial bone structure to estimate age, potentially affecting every family gaming photo shared on these platforms."

What This Means for Gaming Content Creators

Hot take: This is going to mess with gaming content creation in ways Meta probably didn't consider. Young gamers who create content often appear in their own videos and streams. Many gaming families run YouTube channels or Instagram accounts showcasing their gaming setups and achievements.

Now every piece of content they post to Facebook or Instagram gets the bone analysis treatment. Every thumbnail. Every celebration video. Every family gaming moment becomes data for an AI system that's trying to determine ages based on facial structure.

The chilling effect is obvious. Parents might stop sharing gaming achievements with their kids. Young content creators might avoid showing their faces entirely. The social aspect of gaming – which is huge for building communities and friendships – takes a hit.

The Competitive Gaming Angle

Here's something that really grinds my gears: competitive gaming relies heavily on social media presence. Young esports players build their reputations through Instagram posts, Facebook gaming groups, and shared tournament photos.

But now? Every photo from a local tournament or gaming event gets fed through bone structure analysis. These kids aren't doing anything wrong – they're pursuing legitimate competitive gaming careers. But Meta's AI treats them like potential policy violators based on their physical appearance.

That's not just invasive. It's discriminatory. Some people look younger or older than their actual age. Should your gaming opportunities depend on how old an AI thinks you look?

The Bigger Privacy Picture

Personally, I think this crosses a line that tech companies shouldn't cross. Analyzing children's bone structure goes beyond reasonable content moderation into territory that feels genuinely creepy.

And let's be real about the scope here. Meta isn't just analyzing photos of suspected underage users. They're analyzing EVERY photo uploaded to their platforms. That's potentially billions of images, including countless gaming-related posts from adults who never consented to having their bone structure evaluated by AI.

The gaming community values privacy and user control more than most. We understand the importance of protecting personal information. But Meta's new system operates without explicit consent and provides no opt-out mechanism.

Data Storage and Security Concerns

What happens to all this bone structure data? Meta hasn't been crystal clear about storage, retention, or potential sharing with law enforcement. Given their track record with user privacy, that's concerning.

Gaming setups often include expensive hardware. Build your custom gaming PC with BitCrate and you're looking at thousands of dollars in equipment. Photos of those setups already reveal a lot about what's in your home, so the last thing gamers need is another layer of analysis on top of them.

Are we really comfortable with a company that's had multiple major privacy breaches now potentially storing biometric analysis of our facial bone structure? The risk-benefit calculation doesn't add up.

What Gaming Families Should Do

Look, I'm not saying delete your Instagram account and go off-grid. But gaming families need to think differently about what they share and how they share it.

Consider alternatives for sharing gaming achievements. Discord servers, private gaming communities, or direct messaging might be better options for family gaming content. You maintain the social aspect without feeding Meta's bone analysis machine.

If you do continue using Facebook and Instagram for gaming content, be aware that every photo with people in it now gets this treatment. That awareness should inform your sharing decisions.

Most importantly, have conversations with young gamers about digital privacy. This isn't just about Meta – it's about understanding that every platform makes choices about how to analyze and use our data.

The Industry Response Gap

Honestly, the gaming industry's silence on this issue is disappointing. Major gaming companies partner heavily with Meta for marketing and community building. Where's the pushback on behalf of their youngest players?

Gaming should be about skill, creativity, and community. Not about whether an AI thinks you look old enough to participate. The industry needs to speak up about protecting young gamers' privacy rights.

This bone analysis system represents a fundamental shift in how tech companies view and treat users. Today it's age verification. Tomorrow it could be emotional state analysis, health condition detection, or who knows what else.

The gaming community has always been ahead of the curve on digital rights issues. We understand technology better than most. We need to use that knowledge to push back against invasive practices that treat us like data points rather than people.

Meta's bone structure AI isn't just creepy tech news – it's a preview of a future where our physical characteristics become constant subjects of algorithmic judgment. That's not the kind of gaming world any of us should accept.
